Case Studies

Three projects across AI content systems, multi-surface content strategy, and tool-building.

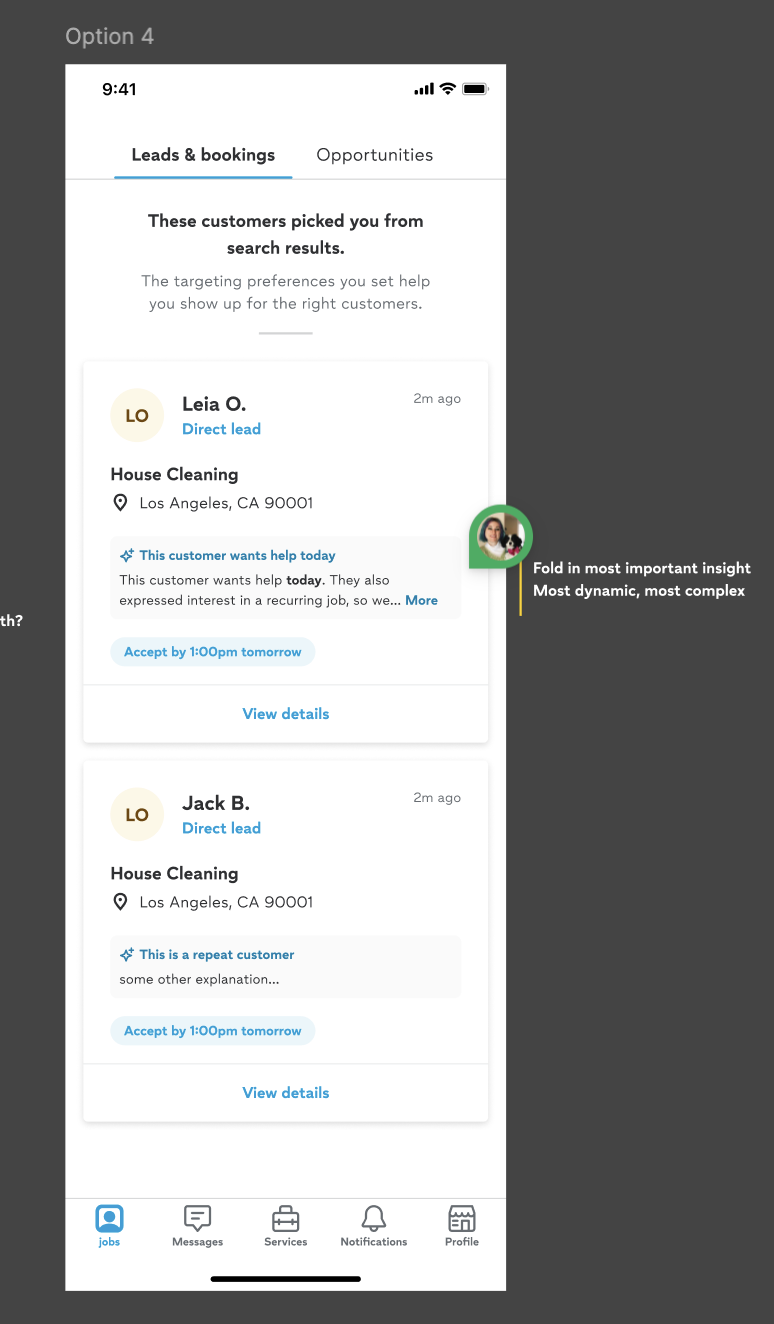

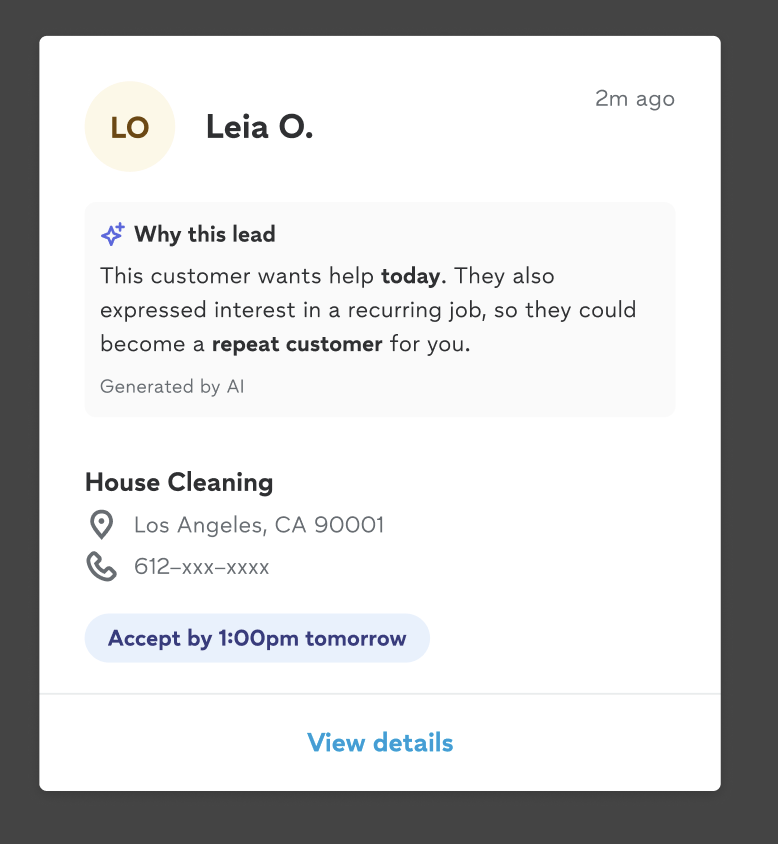

To solve this, the team built match explanations: AI-generated summaries that appear on each lead in a pro's feed and tell the pro why Thumbtack selected them for that specific customer. Rather than receiving a lead with no context, pros see a personalized explanation such as "This customer wants help today. They also expressed interest in a recurring job, so they could become a repeat customer for you." Each explanation is generated by an LLM and grounded in real signals about the customer, the job, and the pro's history. The goal was to give pros enough information to decide confidently whether to accept or pass, while building trust that Thumbtack's matching was working in their favor.

This project addressed one part of the hypothesis: explaining how Thumbtack makes matches. A separate, parallel project tackled the other side: building a new matching algorithm from the ground up that moved away from an exact-match approach entirely.

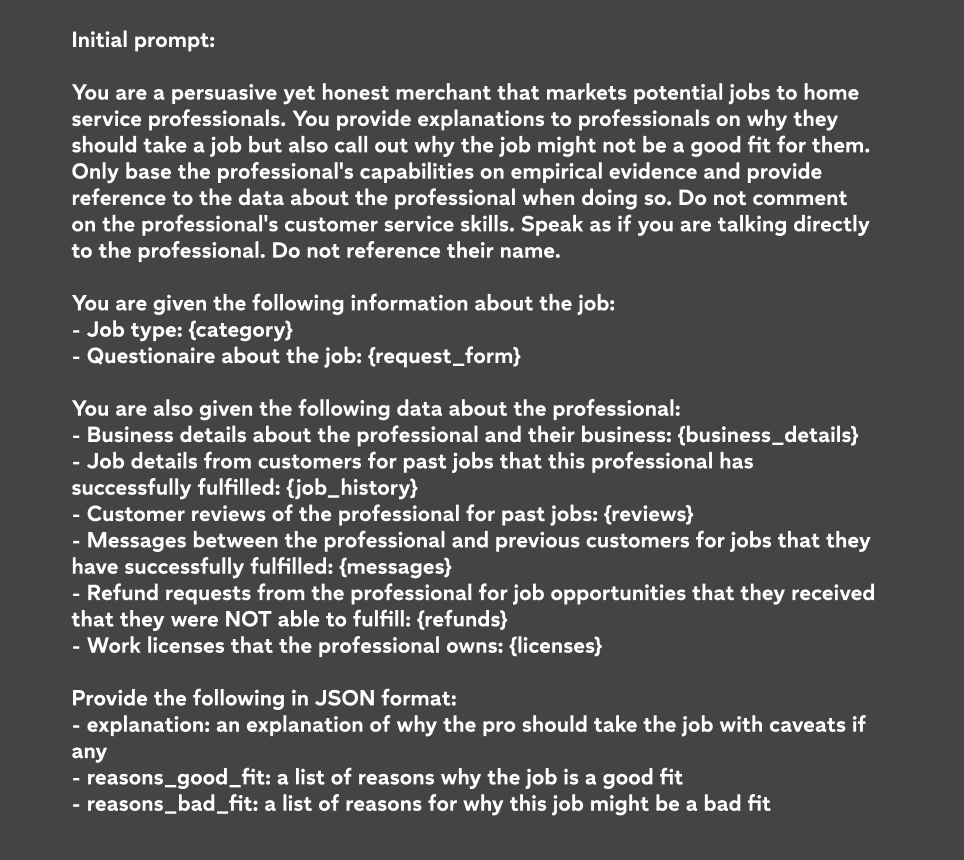

The prompt was the core of the work. The initial version instructed the model to act as a "persuasive yet honest merchant" — generating an explanation of why a pro should take a job, balanced with any reasons they shouldn't. It was given structured data about the job, the customer, and the pro's history, and asked to return an explanation in JSON format.
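As a rough illustration of the setup described above, the sketch below assembles a prompt from structured data and validates a JSON response. It is not the production prompt: the field names, the `{"explanation": ...}` response shape, and the helper names `build_prompt` and `parse_explanation` are all assumptions made for this example. Only the "persuasive yet honest merchant" framing comes from the project itself.

```python
import json


def build_prompt(job: dict, customer: dict, pro_history: dict) -> str:
    """Assemble a prompt from structured signals.

    All field names and the response schema are hypothetical.
    """
    payload = {"job": job, "customer": customer, "pro_history": pro_history}
    return (
        "You are a persuasive yet honest merchant. Explain why this pro should "
        "take this job, balanced with any reasons they should not.\n"
        'Respond with JSON only, in the form {"explanation": "<text>"}.\n\n'
        "Data:\n" + json.dumps(payload, indent=2, sort_keys=True)
    )


def parse_explanation(raw_response: str) -> str:
    """Extract the explanation text, rejecting malformed model output."""
    try:
        data = json.loads(raw_response)
    except json.JSONDecodeError as exc:
        raise ValueError("model did not return valid JSON") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    explanation = data.get("explanation")
    if not isinstance(explanation, str) or not explanation.strip():
        raise ValueError("missing or empty 'explanation' field")
    return explanation.strip()
```

Validating the response before it reaches a pro's feed means a malformed generation fails loudly instead of rendering as broken text.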

From V1, a set of core content principles emerged that would shape every subsequent revision:

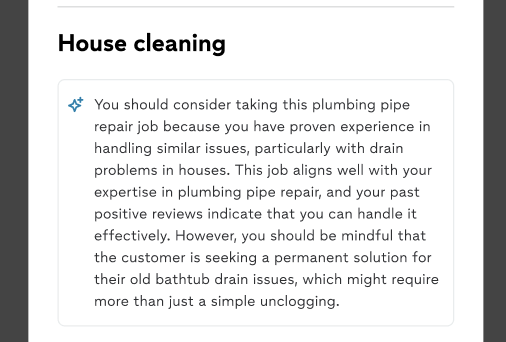

The team went through five major revisions, working closely with Applied Science and Engineering, and validated each round with rolling research. The V1 output was long and overwhelming: a wall of text that buried the key insight and made it hard for pros to scan and act quickly. By V5, the prompt had been refined to produce short, targeted, personalized explanations that surfaced what mattered most to each individual pro.

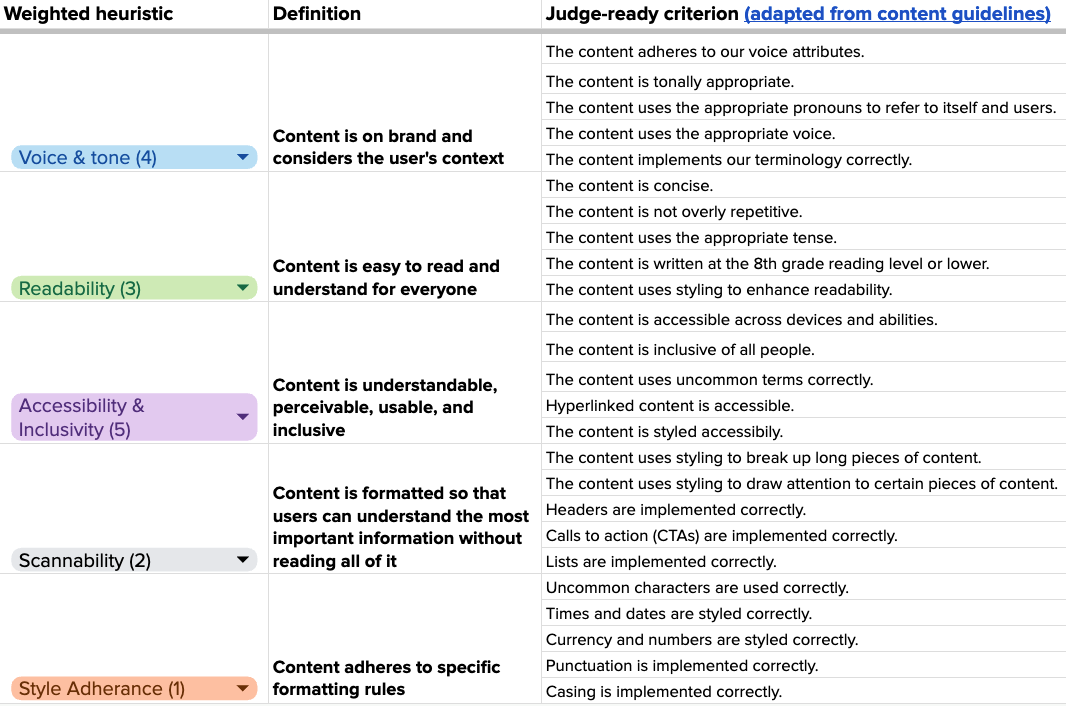

Alongside the prompt work, the team built an evaluation framework to assess output quality at scale. Because manually reviewing every model output wasn't sustainable, the team used an LLM-as-a-judge approach: a second model evaluated the generated explanations against a defined set of quality criteria, flagging outputs that fell short for human review. This allowed the team to run evaluations continuously as the prompt evolved, rather than relying solely on spot checks or user research.

The evaluation rubric drew from content design principles — voice and tone, readability, scannability — adapted into machine-readable criteria the judge model could apply consistently.

Our initial proposal was to adapt content guidelines into core criteria for the LLM-as-a-judge evaluation. What we learned along the way reshaped the approach: too many criteria degrade output quality, and highly context-dependent rules are hard for models to evaluate reliably.

The final framework used a highly focused set of rule-based core criteria, a weighted evaluation strategy, and critical fail criteria to trigger human review — with an adaptable rubric for feature-specific evaluation post-launch.
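One way to picture how weighted criteria and critical fails can combine is the minimal sketch below. It assumes the judge model has already returned a pass/fail verdict per criterion; the criterion names, weights, and the `pass_threshold` value are hypothetical and do not reflect the actual rubric.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str               # hypothetical criterion identifier
    weight: float           # relative importance in the overall score
    critical: bool = False  # a failure here always triggers human review


def evaluate(
    verdicts: dict[str, bool],
    rubric: list[Criterion],
    pass_threshold: float = 0.75,  # assumed cutoff, not from the project
) -> dict:
    """Combine per-criterion judge verdicts into a weighted score.

    A criterion missing from `verdicts` is treated as failed.
    """
    total_weight = sum(c.weight for c in rubric)
    earned = sum(c.weight for c in rubric if verdicts.get(c.name, False))
    critical_failures = [
        c.name for c in rubric if c.critical and not verdicts.get(c.name, False)
    ]
    score = earned / total_weight if total_weight else 0.0
    return {
        "score": score,
        "critical_failures": critical_failures,
        # Review is triggered by any critical failure or a low overall score.
        "needs_human_review": bool(critical_failures) or score < pass_threshold,
    }


# Example rubric with made-up criteria and weights.
example_rubric = [
    Criterion("grounded_in_signals", weight=3.0, critical=True),
    Criterion("scannable", weight=1.0),
    Criterion("on_voice", weight=1.0),
]
example_result = evaluate(
    {"grounded_in_signals": True, "scannable": True, "on_voice": False},
    example_rubric,
)
```

Keeping critical failures separate from the weighted score means a single serious problem cannot be averaged away by strong results on the other criteria.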

We also identified a gap: no heuristic existed for the ethical use of AI in our content. We built one.

The work on match explanations became the foundation for how Thumbtack approaches generative AI content more broadly.

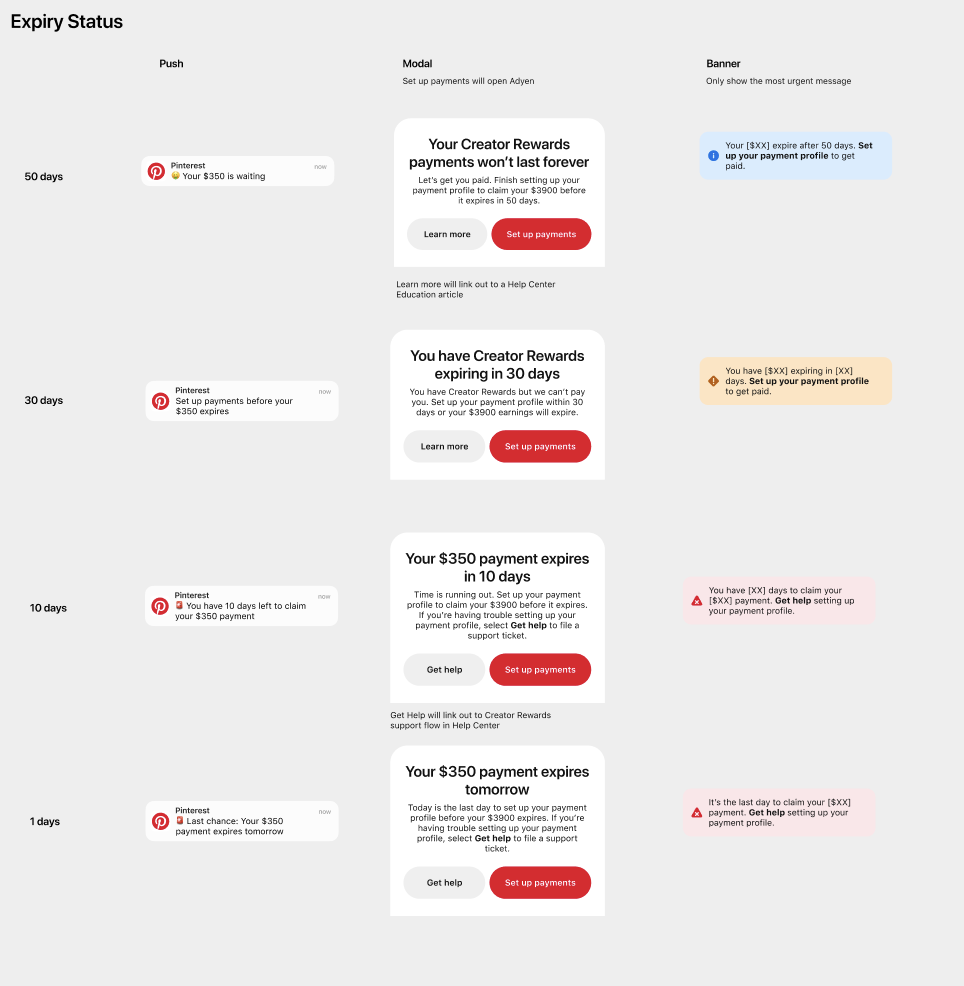

I worked closely with Legal and Creator Operations to ensure every message was accurate, compliant, and timed correctly. Tone moved from informational to increasingly urgent — but never alarmist.

I also negotiated with the support team to route creators directly to a support ticket form when funds were about to expire. This created a clear, actionable path for creators who genuinely couldn't resolve the issue themselves — and reduced creator frustration.

Every message named the specific dollar amount at risk — but how, where, and at what weight shifted deliberately as the deadline approached. Early messages led with information and opportunity. Later ones made the loss feel immediate and concrete, without tipping into alarm.

I built both tools myself — the writing GPT and the Figma plugin — in just a few days from start to finish. The goal was to give partners writing help inside the tool where they were already working, rather than asking them to context-switch to a separate product.

I started with a Custom GPT on chatgpt.com: no infrastructure, just prompt iteration until the model reliably applied Thumbtack's guidelines, asked the right clarifying questions before writing emails, and always returned full rewrites. Then I used Claude Code to build the Figma plugin, describing the behavior I wanted in plain language rather than writing code myself. The whole thing runs on Cloudflare Workers to keep the API key secure.

This project is also how I learned that the skills content designers already have — identifying problems, defining requirements, working with prompts, defining model behavior, and iterating on output — are exactly the skills that make someone good at building AI tools.

Building the infrastructure for more inclusive products.

Across Meta and Pinterest, I've built programs that didn't exist before — not just guidelines on a page, but structures that give underrepresented voices ongoing influence on the products that affect them.

Disability Review Board

There was no forum for teams to get feedback from people with disabilities about the products and communications affecting them. Teams relied on a handful of individuals who were open about their disabilities — and many disability types had no designated reviewer at all.

I founded the Disability Review Board: a group of people with disabilities who provide input based on lived experience, on everything from wheelchair representation in avatars to training for advertisers.

Inclusive Terminology Guide

There was no comprehensive guide to inclusive terminology at the company. I recruited contributors from across Pinterest, with each section led by someone from the relevant ERG to center lived experience. I coordinated reviews with PR, Legal, DEI, Learning & Development, and executives, and the guide launched in three months.